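Installing and configuring the JDK and SSH

I. Set up a small five-node cluster. If you are using virtual machines, create five of them.

Assign each of the five machines an IP address and a role as listed below. In a cluster it is best to use the same user name on every machine; otherwise, create a group and put the differing users into it. All five of my machines use the user name hadoop. The host names can be anything you like.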

192.168.0.25 ----- namenode1 (master): active namenode, zookeeper, journalnode, zkfc ----- host name: namenode1

192.168.0.26 ----- namenode2 (slave): standby namenode, zookeeper, journalnode, zkfc ----- host name: namenode2

192.168.0.27 ----- datanode (slave): datanode, zookeeper, journalnode ----- host name: datanode

192.168.0.28 ----- datanode1 (slave): datanode, zookeeper, journalnode ----- host name: datanode1

192.168.0.29 ----- datanode2 (slave): datanode, zookeeper, journalnode ----- host name: datanode2

If the user names differ, create a group and add the users to it:

sudo addgroup hadoop   # create the hadoop group

sudo adduser -ingroup hadoop one   # create a user named one in the hadoop group

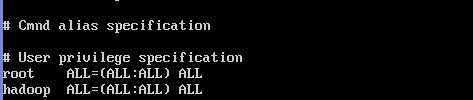

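II. The hadoop user is an ordinary user without root privileges, so grant it sudo rights.

As root, edit the sudoers file:

nano /etc/sudoers   # open the file and grant the hadoop user sudo rights; if the user you created is one, the line is: one ALL=(ALL:ALL) ALL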

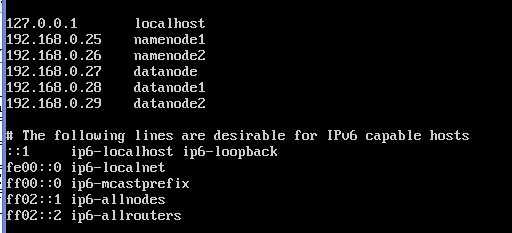

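III. On each of the five machines, edit /etc/hosts and /etc/hostname.

The hosts file defines the mapping between host names and IP addresses.

sudo -i   # become root

nano /etc/hosts   # add one line per node, pairing each IP address with its host name

In nano, press Ctrl+O and then Enter to save, and Ctrl+X to exit.

The hostname file defines the machine's own host name.

Note: each machine's hostname file holds that machine's own name: the master's name on the master, the slave's name on each slave. For example, on datanode it is datanode.

Afterwards, run ping namenode2 to check that the name resolves and the host is reachable.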
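As a rough sketch of what the hosts entries might look like, the helper below prints one line per node using the IP addresses from the role list above. The function name print_cluster_hosts is made up for illustration, and the host names datanode1 and datanode2 are assumed to follow the spelling used in the zoo.cfg and slaves files later in this article; adjust them if your machines are named differently.

```shell
#!/bin/sh
# Hypothetical helper: print /etc/hosts entries for the five nodes.
# IPs come from the role list above; host names are assumed to match
# the ones used in zoo.cfg and slaves.
print_cluster_hosts() {
    cat <<'EOF'
192.168.0.25 namenode1
192.168.0.26 namenode2
192.168.0.27 datanode
192.168.0.28 datanode1
192.168.0.29 datanode2
EOF
}
```

Printing the entries first lets you review them before touching the file. To append them, something like print_cluster_hosts | sudo tee -a /etc/hosts should work on each machine.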

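IV. Install the JDK and SSH on all five machines and configure the environment variables. The steps are the same on every machine:

To prepare, download the package jdk-7u3-linux-i586.tar.gz.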

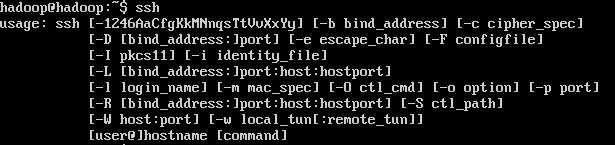

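1. sudo apt-get install openssh-server   # install the SSH server

Run ssh with no arguments; if it prints its usage message, the installation succeeded.

2. tar zxvf jdk-7u3-linux-i586.tar.gz   # unpack the JDK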

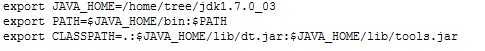

3. sudo nano /etc/profile   # append the Java environment variable settings at the bottom of the file and save

4. source /etc/profile   # apply the new settings

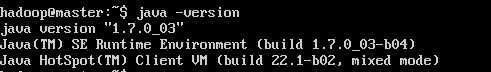

To verify the JDK installation, run:

5. java -version   # prints the JDK version if the installation succeeded

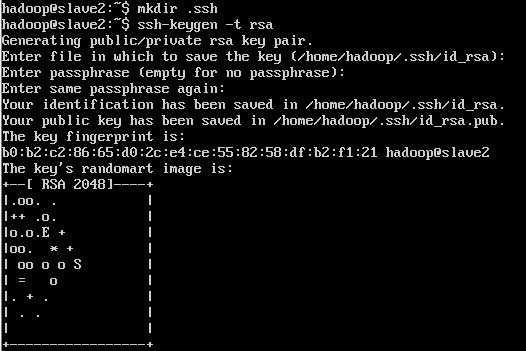
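The lines added to /etc/profile in step 3 are not reproduced in the text. A minimal sketch, assuming the JDK was unpacked to /home/hadoop/jdk1.7.0_03 (the path used for JAVA_HOME in hadoop-env.sh later in this article), might be:

```shell
# Assumed location of the unpacked JDK; change it to match your system.
export JAVA_HOME=/home/hadoop/jdk1.7.0_03
# Put the JDK tools (java, javac, jps) ahead of anything else on PATH.
export PATH="$JAVA_HOME/bin:$PATH"
```

The author's actual settings may have included other variables as well; these two are the ones the later steps rely on.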

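6. Configure passwordless SSH login. Remember to run these commands as the hadoop user.

7. ssh-keygen -t rsa   # press Enter at every prompt; this generates the key pair and creates the .ssh directory

8. cd .ssh   # enter the .ssh directory

cp id_rsa.pub authorized_keys   # install the public key as authorized_keys (cp overwrites an existing file; to add to one, use cat id_rsa.pub >> authorized_keys)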

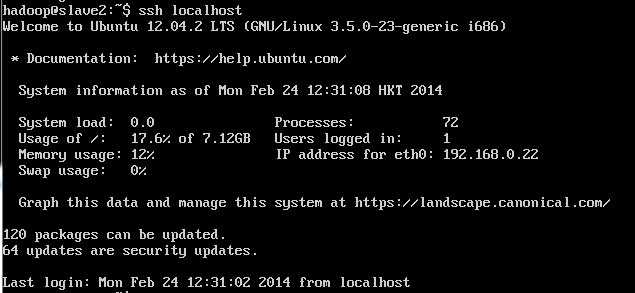

9. ssh localhost   # passwordless login to localhost should now work

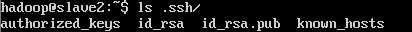

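Alternatively, run ls .ssh/ and check that the key files are there.

10. Copy id_rsa.pub to the other machines (run the commands from the hadoop user's home directory).

On 192.168.0.25: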

scp .ssh/id_rsa.pub 192.168.0.26:/home/hadoop/.ssh/25.pub

scp .ssh/id_rsa.pub 192.168.0.27:/home/hadoop/.ssh/25.pub

scp .ssh/id_rsa.pub 192.168.0.28:/home/hadoop/.ssh/25.pub

scp .ssh/id_rsa.pub 192.168.0.29:/home/hadoop/.ssh/25.pub

On 192.168.0.26:

scp .ssh/id_rsa.pub 192.168.0.25:/home/hadoop/.ssh/26.pub

scp .ssh/id_rsa.pub 192.168.0.27:/home/hadoop/.ssh/26.pub

scp .ssh/id_rsa.pub 192.168.0.28:/home/hadoop/.ssh/26.pub

scp .ssh/id_rsa.pub 192.168.0.29:/home/hadoop/.ssh/26.pub

On 192.168.0.27:

scp .ssh/id_rsa.pub 192.168.0.25:/home/hadoop/.ssh/27.pub

scp .ssh/id_rsa.pub 192.168.0.26:/home/hadoop/.ssh/27.pub

scp .ssh/id_rsa.pub 192.168.0.28:/home/hadoop/.ssh/27.pub

scp .ssh/id_rsa.pub 192.168.0.29:/home/hadoop/.ssh/27.pub

On 192.168.0.28:

scp .ssh/id_rsa.pub 192.168.0.25:/home/hadoop/.ssh/28.pub

scp .ssh/id_rsa.pub 192.168.0.26:/home/hadoop/.ssh/28.pub

scp .ssh/id_rsa.pub 192.168.0.27:/home/hadoop/.ssh/28.pub

scp .ssh/id_rsa.pub 192.168.0.29:/home/hadoop/.ssh/28.pub

On 192.168.0.29:

scp .ssh/id_rsa.pub 192.168.0.25:/home/hadoop/.ssh/29.pub

scp .ssh/id_rsa.pub 192.168.0.26:/home/hadoop/.ssh/29.pub

scp .ssh/id_rsa.pub 192.168.0.27:/home/hadoop/.ssh/29.pub

scp .ssh/id_rsa.pub 192.168.0.28:/home/hadoop/.ssh/29.pub

11. Append the received public keys to the authorized_keys file.

On 192.168.0.25:

cat .ssh/26.pub >> .ssh/authorized_keys

cat .ssh/27.pub >> .ssh/authorized_keys

cat .ssh/28.pub >> .ssh/authorized_keys

cat .ssh/29.pub >> .ssh/authorized_keys

On 192.168.0.26:

cat .ssh/25.pub >> .ssh/authorized_keys

cat .ssh/27.pub >> .ssh/authorized_keys

cat .ssh/28.pub >> .ssh/authorized_keys

cat .ssh/29.pub >> .ssh/authorized_keys

On 192.168.0.27:

cat .ssh/25.pub >> .ssh/authorized_keys

cat .ssh/26.pub >> .ssh/authorized_keys

cat .ssh/28.pub >> .ssh/authorized_keys

cat .ssh/29.pub >> .ssh/authorized_keys

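Do the same on 192.168.0.28 and 192.168.0.29, appending the four keys received from the other machines.

To verify, run ssh 192.168.0.26 hostname; it should print the remote host name without asking for a password:

namenode2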
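Steps 10 and 11 repeat the same scp pattern on every machine, so the command list can be generated instead of typed. The sketch below only prints the scp commands for one node and runs nothing itself; the function name print_key_copy_commands and the NODES variable are made up for illustration, and the target path assumes the hadoop user's home is /home/hadoop as in the commands above.

```shell
#!/bin/sh
# Cluster IPs as listed in this article.
NODES="192.168.0.25 192.168.0.26 192.168.0.27 192.168.0.28 192.168.0.29"

# Print the scp commands that the node with IP $1 would run to send its
# public key to every other node. Nothing is executed here.
print_key_copy_commands() {
    self_ip=$1
    suffix=${self_ip##*.}   # last octet of the IP, e.g. 25
    for ip in $NODES; do
        # Skip the node's own address.
        if [ "$ip" != "$self_ip" ]; then
            echo "scp .ssh/id_rsa.pub $ip:/home/hadoop/.ssh/$suffix.pub"
        fi
    done
}
```

For example, print_key_copy_commands 192.168.0.28 lists the four commands for that machine. After checking the output, it could be piped to sh from the hadoop user's home directory.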

Setting up the ZooKeeper cluster

1. Download ZooKeeper 3.4.5 (zookeeper-3.4.5.tar.gz). I put it in /home/hadoop.

tar zxvf zookeeper-3.4.5.tar.gz   # unpack it in place

2. Configure /etc/profile

sudo nano /etc/profile   # append the following settings at the end

export ZOOKEEPER_HOME=/home/hadoop/zookeeper-3.4.5

export PATH=$ZOOKEEPER_HOME/bin:$ZOOKEEPER_HOME/conf:$PATH

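source /etc/profile   # apply the new settings

3. Configure zookeeper-3.4.5/conf/zoo.cfg. The file does not exist by default, but there is a template named zoo_sample.cfg.

cd zookeeper-3.4.5/conf   # enter the conf directory

cp zoo_sample.cfg zoo.cfg   # copy the template

sudo nano zoo.cfg   # edit zoo.cfg; the changes are the dataDir value and the server.N lines at the end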

---------------------------------------------------------------------------------------------------

# The number of milliseconds of each tick

tickTime=2000

# The number of ticks that the initial

# synchronization phase can take

initLimit=10

# The number of ticks that can pass between

# sending a request and getting an acknowledgement

syncLimit=5

# the directory where the snapshot is stored.

# do not use /tmp for storage, /tmp here is just

# example sakes.

dataDir=/home/hadoop/zookeeper-3.4.5/data

# the port at which the clients will connect

clientPort=2181

#

# Be sure to read the maintenance section of the

# administrator guide before turning on autopurge.

#

# http://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance

#

# The number of snapshots to retain in dataDir

#autopurge.snapRetainCount=3

# Purge task interval in hours

# Set to "0" to disable auto purge feature

#autopurge.purgeInterval=1

server.1=namenode1:2888:3888

server.2=namenode2:2888:3888

server.3=datanode:2888:3888

server.4=datanode1:2888:3888

server.5=datanode2:2888:3888

------------------------------------------------------------------------------------------------------

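Note: create the directory named by dataDir (the data folder), and inside it create a text file called myid.

cd /home/hadoop/zookeeper-3.4.5

mkdir data   # create the data directory

cd /home/hadoop/zookeeper-3.4.5/data   # enter it

touch myid   # create myid and write 1 into it, because this host is server.1 (server.1=namenode1:2888:3888)

When you configure the other machines later, myid is 2 on namenode2, 3 on datanode, 4 on datanode1 and 5 on datanode2. Each value follows the matching server.N line.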

4. Once the master is configured, copy ZooKeeper to the other machines:

scp -r zookeeper-3.4.5 hadoop@namenode2:/home/hadoop/

scp -r zookeeper-3.4.5 hadoop@datanode:/home/hadoop/

scp -r zookeeper-3.4.5 hadoop@datanode1:/home/hadoop/

scp -r zookeeper-3.4.5 hadoop@datanode2:/home/hadoop/

Remember to change myid on each slave, and to configure /etc/profile on the slaves as well.
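Because the copied directory carries the master's myid, each slave needs its own value. One way to avoid typing the wrong number is to look it up from the server.N lines in zoo.cfg. This is only a sketch: the function name myid_for_host is made up, and it assumes host names contain no characters that are special in a sed pattern, which holds for the names used here.

```shell
#!/bin/sh
# Hypothetical helper: print the N of the server.N line that names a host.
# $1 = path to zoo.cfg, $2 = host name exactly as written in zoo.cfg.
myid_for_host() {
    cfg=$1
    host=$2
    # Match "server.N=host:..." and print only N. The trailing colon keeps
    # "datanode" from also matching "datanode1" or "datanode2".
    sed -n "s/^server\.\([0-9][0-9]*\)=$host:.*/\1/p" "$cfg"
}
```

On a slave, from /home/hadoop/zookeeper-3.4.5, it could be used as myid_for_host conf/zoo.cfg "$(hostname)" > data/myid, provided the machine's host name matches its entry in zoo.cfg.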

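5. Start ZooKeeper. It must be running before the Hadoop cluster is started.

zkServer.sh start   # run this on every machine, master and slaves alike

bin/zkServer.sh status   # run on each machine; in this five-node ensemble one machine reports leader and the other four report follower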

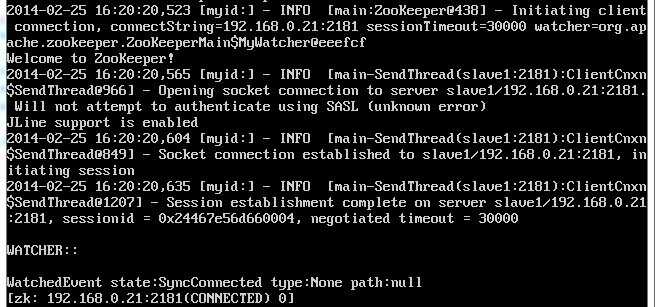

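zkCli.sh -server 192.168.0.26:2181   # start the client shell

quit   # exit the client

help   # list the available commands

That completes the ZooKeeper cluster setup.

Configuring HDFS HA on a Hadoop 2.2.0 cluster

1. I put the Hadoop archive in /home/hadoop. First unpack it: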

tar zxvf hadoop-2.2.0.tar.gz

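2. Configuring Hadoop

Before editing the configuration, create the following directories on the local file system. The paths are relative to the hadoop user's home directory, /home/hadoop, as the values in the configuration files below show: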

/dfs/name

/dfs/data

/tmp/journal

Give these directories the necessary permissions:

sudo chmod 777 tmp/

sudo chmod 777 dfs/
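The directory creation itself is not shown as a command. A minimal sketch follows, assuming the base directory is /home/hadoop as implied by the dfs.namenode.name.dir, dfs.datanode.data.dir and dfs.journalnode.edits.dir values later in this article; the function name make_hadoop_dirs is made up for illustration.

```shell
#!/bin/sh
# Hypothetical helper: create the local directories that the HDFS
# configuration refers to. $1 = base directory (assumed /home/hadoop).
make_hadoop_dirs() {
    base=$1
    # -p creates missing parents and does not fail if a directory exists.
    mkdir -p "$base/dfs/name" "$base/dfs/data" "$base/tmp/journal"
}
```

Running make_hadoop_dirs /home/hadoop as the hadoop user on each machine would leave the directories owned by that user, so the chmod step may be less important in that case.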

Seven configuration files are involved:

~/hadoop-2.2.0/etc/hadoop/hadoop-env.sh

~/hadoop-2.2.0/etc/hadoop/yarn-env.sh

~/hadoop-2.2.0/etc/hadoop/slaves

~/hadoop-2.2.0/etc/hadoop/core-site.xml

~/hadoop-2.2.0/etc/hadoop/hdfs-site.xml

~/hadoop-2.2.0/etc/hadoop/mapred-site.xml

~/hadoop-2.2.0/etc/hadoop/yarn-site.xml

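Some of these files do not exist by default; create them by copying the corresponding template file.

For example, if mapred-site.xml does not exist:

cd /home/hadoop/hadoop-2.2.0/etc/hadoop   # enter the Hadoop configuration directory

cp mapred-site.xml.template mapred-site.xml   # copy the template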

3. Configure hadoop-env.sh

sudo nano /home/hadoop/hadoop-2.2.0/etc/hadoop/hadoop-env.sh

export JAVA_HOME=/home/hadoop/jdk1.7.0_03   # point to the JDK

4. Configure yarn-env.sh

sudo nano /home/hadoop/hadoop-2.2.0/etc/hadoop/yarn-env.sh

export JAVA_HOME=/home/hadoop/jdk1.7.0_03   # point to the JDK

5. Configure slaves with the following content:

datanode

datanode1

datanode2

6. Configure core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://mycluster</value>

</property>

<property>

<name>io.file.buffer.size</name>

<value>131072</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>file:/home/hadoop/tmp</value>

</property>

<property>

<name>hadoop.proxyuser.hadoop.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.hadoop.groups</name>

<value>*</value>

</property>

<property>

<name>ha.zookeeper.quorum</name>

<value>192.168.0.25:2181,192.168.0.26:2181,192.168.0.27:2181,192.168.0.28:2181,192.168.0.29:2181</value>

</property>

<property>

<name>ha.zookeeper.session-timeout.ms</name>

<value>1000</value>

</property>

</configuration>

7. Configure hdfs-site.xml

<configuration>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/home/hadoop/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/home/hadoop/dfs/data</value>

</property>

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

<property>

<name>dfs.permissions</name>

<value>false</value>

</property>

<property>

<name>dfs.permissions.enabled</name>

<value>false</value>

</property>

<property>

<name>dfs.nameservices</name>

<value>mycluster</value>

</property>

<property>

<name>dfs.ha.namenodes.mycluster</name>

<value>nn1,nn2</value>

</property>

<property>

<name>dfs.namenode.rpc-address.mycluster.nn1</name>

<value>192.168.0.25:9000</value>

</property>

<property>

<name>dfs.namenode.rpc-address.mycluster.nn2</name>

<value>192.168.0.26:9000</value>

</property>

<property>

<name>dfs.namenode.servicerpc-address.mycluster.nn1</name>

<value>192.168.0.25:53310</value>

</property>

<property>

<name>dfs.namenode.servicerpc-address.mycluster.nn2</name>

<value>192.168.0.26:53310</value>

</property>

<property>

<name>dfs.namenode.http-address.mycluster.nn1</name>

<value>192.168.0.25:50070</value>

</property>

<property>

<name>dfs.namenode.http-address.mycluster.nn2</name>

<value>192.168.0.26:50070</value>

</property>

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://192.168.0.25:8485;192.168.0.26:8485;192.168.0.27:8485/mycluster</value>

</property>

<property>

<name>dfs.client.failover.proxy.provider.mycluster</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

<property>

<name>dfs.ha.fencing.methods</name>

<value>sshfence</value>

</property>

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/home/hadoop/.ssh/id_rsa</value>

</property>

<property>

<name>dfs.ha.fencing.ssh.connect-timeout</name>

<value>30000</value>

</property>

<property>

<name>dfs.journalnode.edits.dir</name>

<value>/home/hadoop/tmp/journal</value>

</property>

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<property>

<name>ha.failover-controller.cli-check.rpc-timeout.ms</name>

<value>60000</value>

</property>

<property>

<name>ipc.client.connect.timeout</name>

<value>60000</value>

</property>

<property>

<name>dfs.image.transfer.bandwidthPerSec</name>

<value>4194304</value>

</property>

</configuration>

8. Configure mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>192.168.0.25:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>192.168.0.25:19888</value>

</property>

</configuration>

9. Configure yarn-site.xml

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandler</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>192.168.0.25:8032</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>192.168.0.25:8030</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>192.168.0.25:8031</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address</name>

<value>192.168.0.25:8033</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>192.168.0.25:8088</value>

</property>

</configuration>

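Once the master is configured, you can copy the hadoop-2.2.0 directory straight to the slaves, which saves time.

Run these commands as the hadoop user; they only need to be run on the master: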

scp -r hadoop-2.2.0 hadoop@namenode2:/home/hadoop/

scp -r hadoop-2.2.0 hadoop@datanode:/home/hadoop/

scp -r hadoop-2.2.0 hadoop@datanode1:/home/hadoop/

scp -r hadoop-2.2.0 hadoop@datanode2:/home/hadoop/

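0. Start ZooKeeper on every node first, if the ZooKeeper cluster is not already running.

./bin/zkServer.sh start   # remember to run this on every machine

1. Then, on one of the namenode hosts, run the following command to create the HA namespace in ZooKeeper: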

./bin/hdfs zkfc -formatZK

2. On every node, start the journal daemon with:

./sbin/hadoop-daemon.sh start journalnode

3. On the primary namenode, format the namenode and journalnode directories with ./bin/hadoop namenode -format:

./bin/hadoop namenode -format mycluster

4. On the primary namenode, start the namenode process with ./sbin/hadoop-daemon.sh start namenode:

./sbin/hadoop-daemon.sh start namenode

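5. On the standby namenode, run the first command below. It formats the standby namenode's directory and copies the metadata over from the primary namenode; it does not format the journalnode directories again. Then start the standby namenode process with the second command.

./bin/hdfs namenode -bootstrapStandby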

./sbin/hadoop-daemon.sh start namenode

6. On both namenode hosts, run:

./sbin/hadoop-daemon.sh start zkfc

7. On every datanode host, start the datanode:

./sbin/hadoop-daemon.sh start datanode

From then on, a single command starts all the processes and services:

./sbin/start-dfs.sh

Then open the following two addresses to check the state of the two namenodes:

http://192.168.0.25:50070/dfshealth.jsp

http://192.168.0.26:50070/dfshealth.jsp

To stop all HDFS-related processes, run:

./sbin/stop-dfs.sh

To test failover, find the namenode's process ID with jps on either namenode machine, kill it with kill -9, and watch whether the other namenode changes from standby to active.

hd@hd0:/opt/hadoop/apps/hadoop$ jps

1686 JournalNode

1239 QuorumPeerMain

1380 NameNode

2365 Jps

1863 DFSZKFailoverController

hd@hd0:/opt/hadoop/apps/hadoop$ kill -9 1380

Then check the zkfc log on the machine whose namenode was in standby. If the last line looks like the following, the failover succeeded:

2013-12-31 16:14:41,114 INFO org.apache.hadoop.ha.ZKFailoverController: Successfully transitioned NameNode at hd0/192.168.0.25:53310 to active state

Now restart the namenode process that was killed:

./sbin/hadoop-daemon.sh start namenode

The last line of the corresponding zkfc log then reads:

2013-12-31 16:14:55,683 INFO org.apache.hadoop.ha.ZKFailoverController: Successfully transitioned NameNode at hd2/192.168.0.26:53310 to standby state

You can kill the namenode process back and forth on the two namenode machines to test the HDFS HA configuration.
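When repeating this test, picking the process ID out of the jps listing by hand gets tedious. The sketch below extracts it with awk; the function name namenode_pid is made up for illustration, and it assumes the two-column "PID Name" output format shown in the jps listing above.

```shell
#!/bin/sh
# Hypothetical helper: read jps output on stdin and print the PID of the
# NameNode process. The exact match on column 2 avoids picking up other
# daemons such as JournalNode.
namenode_pid() {
    awk '$2 == "NameNode" { print $1 }'
}
```

With that in place, kill -9 "$(jps | namenode_pid)" would kill the local namenode. To see which namenode is currently active without reading the logs, hdfs haadmin -getServiceState nn1 (and nn2) can be used, where nn1 and nn2 are the IDs set in dfs.ha.namenodes.mycluster.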

Chapter 9: Setting up HDFS HA on Hadoop 2.2.0

Original article: http://www.cnblogs.com/junrong624/p/3580477.html