While learning DCGAN with Keras, I ran into the following error:

tensorflow.python.framework.errors_impl.FailedPreconditionError: Error while reading resource variable _AnonymousVar33 from Container: localhost. This could mean that the variable was uninitialized. Not found: Resource localhost/_AnonymousVar33/N10tensorflow3VarE does not exist.

[[node mul_1/ReadVariableOp (defined at /Users/xxx/anaconda3/envs/tensorflow/lib/python3.6/site-packages/tensorflow_core/python/framework/ops.py:1751) ]] [Op:__inference_keras_scratch_graph_2262]

Function call stack:

keras_scratch_graph

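This happens because the author's code was written for TensorFlow 1.x, while the local environment runs TensorFlow 2.0 or later, so the two are incompatible. One way to resolve it is to add code at the top of the script that makes TensorFlow 2.0 fall back to 1.x behavior.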
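
The snippet the post refers to is not included in the text. A commonly used form of this workaround, shown here as an assumption rather than the author's exact code, switches TensorFlow 2.x to 1.x behavior through the `tf.compat.v1` module. It has to run before any model is built.

```python
# Assumed workaround (not the author's original snippet): make TensorFlow 2.x
# behave like 1.x. Place this at the very top of the script, before Keras
# builds any layers.
import tensorflow.compat.v1 as tf

tf.disable_v2_behavior()  # turns off eager execution and other 2.x defaults

# Sanity check: eager execution should now be off.
eager_enabled = tf.executing_eagerly()
print("eager execution enabled:", eager_enabled)
```

If only eager execution is the problem, calling `tf.compat.v1.disable_eager_execution()` alone is a narrower variant of the same idea.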

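Alternatively, you can modify the old code so that it calls tf.Session.run() (available as tf.compat.v1.Session in TensorFlow 2.x) to adapt it to the new TensorFlow version. There is plenty of material on this, so it is not covered in detail here.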

---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

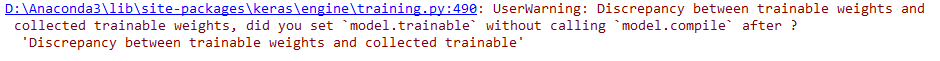

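While studying DCGAN, I ran into the following warning:

Location of the error: [line 138] d_loss_real = self.discriminator.train_on_batch(imgs, valid)

The official documentation describes the issue as follows: a layer's trainable attribute can be set to True or False after instantiation. For the change to take effect, compile() must be called on the model after trainable is modified.

The fix is to build a new frozen_D and use it in place of the discriminator inside combined.

See the Keras DCGAN code for reference.

The code below is based on eriklindernoren/Keras-GAN, with the parts where trainable and compile are easily confused rewritten.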
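
Before the full listing, the idea can be seen on a toy model. The sketch below is a minimal illustration, not the author's code: the tiny `Dense` networks and their sizes are hypothetical stand-ins for the real generator and discriminator. It freezes a wrapper model first and compiles the combined model afterwards, which is the order the documentation asks for.

```python
from keras.layers import Input, Dense
from keras.models import Model

# Hypothetical toy networks standing in for the generator and discriminator.
g_in = Input(shape=(4,))
generator = Model(g_in, Dense(3)(g_in))

d_in = Input(shape=(3,))
base_D = Model(d_in, Dense(1, activation="sigmoid")(d_in))

# Wrapper around the same discriminator layers, marked as non-trainable.
frozen_D = Model(inputs=base_D.inputs, outputs=base_D.outputs)
frozen_D.trainable = False

# Stack generator and frozen discriminator, then compile AFTER freezing.
z = Input(shape=(4,))
combined = Model(z, frozen_D(generator(z)))
combined.compile(loss="binary_crossentropy", optimizer="adam")

# Count the weight tensors the combined model treats as trainable or frozen.
n_trainable = len(combined.trainable_weights)
n_frozen = len(combined.non_trainable_weights)
print("trainable:", n_trainable, "frozen:", n_frozen)
```

In the combined model only the generator's kernel and bias should count as trainable, while the discriminator's kernel and bias are held fixed during generator updates.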

    from keras.datasets import mnist
    from keras.layers import Input, Dense, Reshape, Flatten, Dropout
    from keras.layers import BatchNormalization, Activation, ZeroPadding2D
    # LeakyReLU, UpSampling2D and Conv2D are also exported by keras.layers;
    # the older submodule paths are not available in newer Keras releases.
    from keras.layers import LeakyReLU, UpSampling2D, Conv2D
    from keras.models import Sequential, Model
    from keras.optimizers import Adam
    import matplotlib.pyplot as plt
    import numpy as np

    class DCGAN():
        def __init__(self):
            # Input shape
            self.img_rows = 28
            self.img_cols = 28
            self.channels = 1
            self.img_shape = (self.img_rows, self.img_cols, self.channels)
            self.latent_dim = 100

            optimizer = Adam(0.0002, 0.5)

            base_generator = self.build_generator()
            base_discriminator = self.build_discriminator()

            # Wrap the base networks in their own Model objects.
            self.generator = Model(
                inputs=base_generator.inputs,
                outputs=base_generator.outputs)

            # Discriminator used for training: compiled before anything is frozen.
            self.discriminator = Model(
                inputs=base_discriminator.inputs,
                outputs=base_discriminator.outputs)
            self.discriminator.compile(loss='binary_crossentropy',
                                       optimizer=optimizer,
                                       metrics=['accuracy'])

            # Second wrapper over the same layers, frozen for the combined model.
            frozen_D = Model(
                inputs=base_discriminator.inputs,
                outputs=base_discriminator.outputs)
            frozen_D.trainable = False

            # Combined model: noise -> generator -> frozen discriminator.
            z = Input(shape=(self.latent_dim,))
            img = self.generator(z)
            valid = frozen_D(img)

            # Compiled after trainable was set, so the frozen state takes effect.
            self.combined = Model(z, valid)
            self.combined.compile(loss='binary_crossentropy', optimizer=optimizer)

        def build_generator(self):
            model = Sequential()

            model.add(
                Dense(
                    128 * 7 * 7,
                    activation="relu",
                    input_dim=self.latent_dim))
            model.add(Reshape((7, 7, 128)))
            model.add(UpSampling2D())
            model.add(Conv2D(128, kernel_size=3, padding="same"))
            model.add(BatchNormalization(momentum=0.8))
            model.add(Activation("relu"))
            model.add(UpSampling2D())
            model.add(Conv2D(64, kernel_size=3, padding="same"))
            model.add(BatchNormalization(momentum=0.8))
            model.add(Activation("relu"))
            model.add(Conv2D(self.channels, kernel_size=3, padding="same"))
            model.add(Activation("tanh"))

            model.summary()

            return model

        def build_discriminator(self):
            model = Sequential()

            model.add(
                Conv2D(
                    32,
                    kernel_size=3,
                    strides=2,
                    input_shape=self.img_shape,
                    padding="same"))
            model.add(LeakyReLU(alpha=0.2))
            model.add(Dropout(0.25))
            model.add(Conv2D(64, kernel_size=3, strides=2, padding="same"))
            model.add(ZeroPadding2D(padding=((0, 1), (0, 1))))
            model.add(BatchNormalization(momentum=0.8))
            model.add(LeakyReLU(alpha=0.2))
            model.add(Dropout(0.25))
            model.add(Conv2D(128, kernel_size=3, strides=2, padding="same"))
            model.add(BatchNormalization(momentum=0.8))
            model.add(LeakyReLU(alpha=0.2))
            model.add(Dropout(0.25))
            model.add(Conv2D(256, kernel_size=3, strides=1, padding="same"))
            model.add(BatchNormalization(momentum=0.8))
            model.add(LeakyReLU(alpha=0.2))
            model.add(Dropout(0.25))
            model.add(Flatten())
            model.add(Dense(1, activation='sigmoid'))

            model.summary()

            return model

        def train(self, epochs, batch_size, save_interval, log_interval):
            # Load the dataset
            (X_train, _), (_, _) = mnist.load_data()

            # Rescale -1 to 1
            X_train = X_train / 127.5 - 1.
            X_train = np.expand_dims(X_train, axis=3)

            # Adversarial ground truths
            valid = np.ones((batch_size, 1))
            fake = np.zeros((batch_size, 1))

            logs = []

            for epoch in range(epochs):
                # ---------------------
                #  Train Discriminator
                # ---------------------

                # Select a random batch of images
                idx = np.random.randint(0, X_train.shape[0], batch_size)
                imgs = X_train[idx]

                # Sample noise and generate a batch of new images
                noise = np.random.normal(0, 1, (batch_size, self.latent_dim))
                gen_imgs = self.generator.predict(noise)

                # Train the discriminator (real classified as ones and
                # generated as zeros)
                d_loss_real = self.discriminator.train_on_batch(imgs, valid)
                d_loss_fake = self.discriminator.train_on_batch(gen_imgs, fake)
                d_loss = 0.5 * np.add(d_loss_real, d_loss_fake)

                # ---------------------
                #  Train Generator
                # ---------------------

                # Train the generator (wants discriminator to mistake images
                # as real)
                g_loss = self.combined.train_on_batch(noise, valid)

                # Record [epoch, D loss, D accuracy, G loss] for plotting
                if epoch % log_interval == 0:
                    logs.append([epoch, d_loss[0], d_loss[1], g_loss])

                # Print progress and save a grid of generated samples
                if epoch % save_interval == 0:
                    print("%d [D loss: %f, acc.: %.2f%%] [G loss: %f]" %
                          (epoch, d_loss[0], 100 * d_loss[1], g_loss))
                    self.save_imgs(epoch)

            self.showlogs(logs)

        def showlogs(self, logs):
            logs = np.array(logs)
            names = ["d_loss", "d_acc", "g_loss"]
            for i in range(3):
                plt.subplot(2, 2, i + 1)
                plt.plot(logs[:, 0], logs[:, i + 1])
                plt.xlabel("epoch")
                plt.ylabel(names[i])
            plt.tight_layout()
            plt.show()

        def save_imgs(self, epoch):
            r, c = 5, 5
            noise = np.random.normal(0, 1, (r * c, self.latent_dim))
            gen_imgs = self.generator.predict(noise)

            # Rescale images 0 - 1
            gen_imgs = 0.5 * gen_imgs + 0.5

            fig, axs = plt.subplots(r, c)
            cnt = 0
            for i in range(r):
                for j in range(c):
                    axs[i, j].imshow(gen_imgs[cnt, :, :, 0], cmap='gray')
                    axs[i, j].axis('off')
                    cnt += 1
            # The images/ directory must already exist.
            fig.savefig("images/mnist_%d.png" % epoch)
            plt.close()

    if __name__ == '__main__':
        dcgan = DCGAN()
        dcgan.train(epochs=4000, batch_size=32, save_interval=50, log_interval=10)
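
One detail in the listing is easy to miss: train() maps pixel values to [-1, 1] so they match the generator's tanh output, and save_imgs() maps generated images back to [0, 1] for plotting. The short NumPy sketch below checks that round trip on a few made-up pixel values; the array contents are hypothetical and only the two formulas come from the listing.

```python
import numpy as np

# Hypothetical stand-in for a few MNIST pixel intensities (range 0..255).
pixels = np.array([0.0, 64.0, 128.0, 255.0])

# Same formula as in train(): map [0, 255] to [-1, 1].
scaled = pixels / 127.5 - 1.0

# Same formula as in save_imgs(): map [-1, 1] back to [0, 1].
restored = 0.5 * scaled + 0.5

print("scaled range:", scaled.min(), scaled.max())
print("round trip matches pixels / 255:", np.allclose(restored, pixels / 255.0))
```

The two steps together are equivalent to dividing the original pixels by 255, which is the range imshow expects for floating-point images.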

Original post: https://www.cnblogs.com/sqm724/p/13906952.html