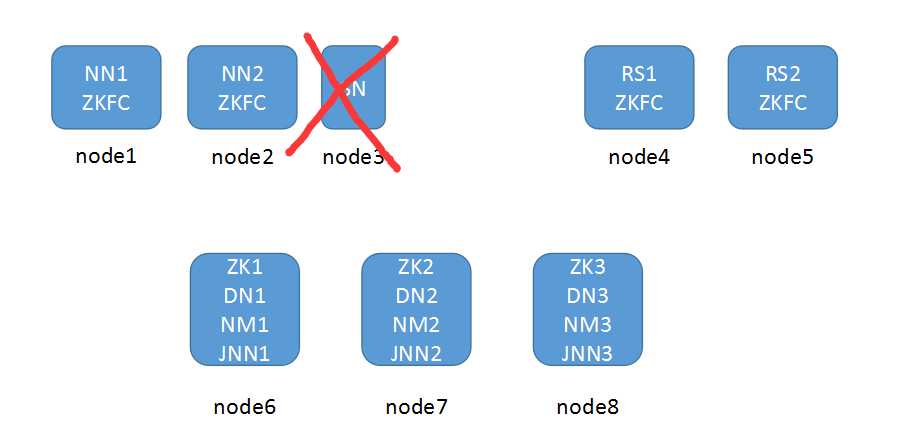

架构图(HA模型没有SNN节点)

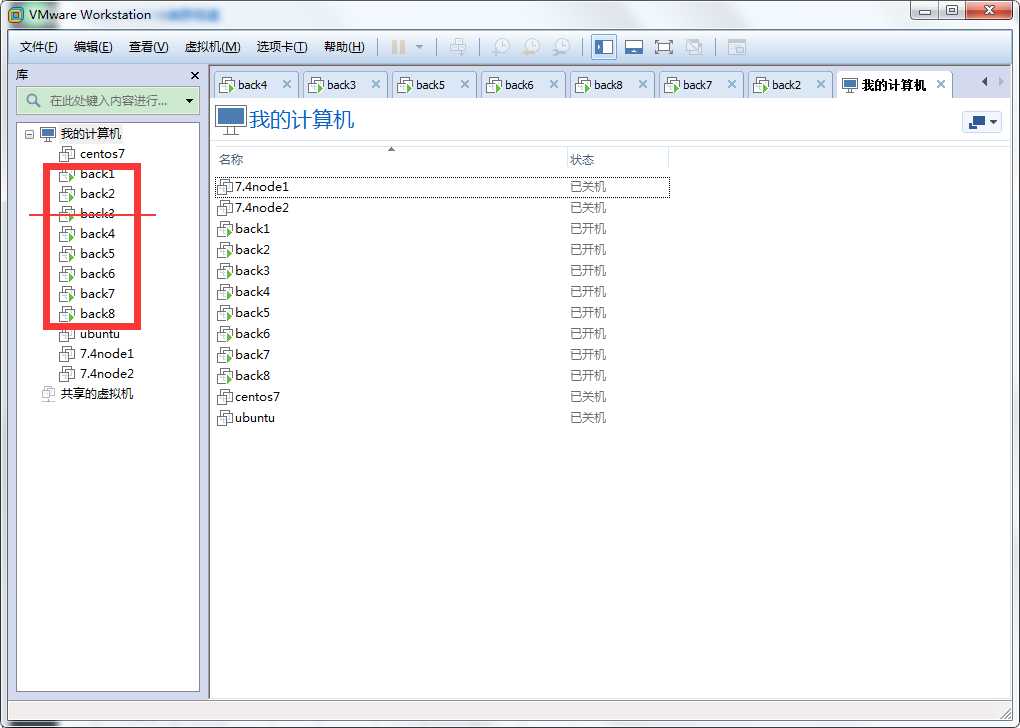

用vm规划了8台机器,用到了7台,SNN节点没用

|

|

NN

|

DN

|

SN

|

ZKFC

|

ZK

|

JNN

|

RM

|

NM

|

|

node1

|

*

|

|

|

*

|

|

|

|

|

|

node2

|

*

|

|

|

*

|

|

|

|

|

|

node3

|

|

|

|

|

|

|||

|

node4

|

|

|

|

*

|

|

|

*

|

|

|

node5

|

|

|

|

*

|

|

|

*

|

|

|

node6

|

|

*

|

|

|

*

|

*

|

|

*

|

|

node7

|

|

*

|

|

|

*

|

*

|

|

*

|

|

node8

|

|

*

|

|

|

*

|

*

|

|

*

|

集群搭建前准备工作:

*搭建集群之前需要关闭所有服务器的selinux和防火墙

1.更改所有服务器的主机名和hosts文件对应关系

[root@localhost ~]# hostnamectl set-hostname node1 [root@localhost ~]# cat /etc/hosts 127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4 ::1 localhost localhost.localdomain localhost6 localhost6.localdomain6 192.168.159.129 node1 192.168.159.130 node2 192.168.159.132 node3 192.168.159.133 node4 192.168.159.136 node5 192.168.159.137 node6 192.168.159.138 node7 192.168.159.139 node8

2.两个NameNode节点做对所有主机的免密登陆,包括自己的节点

[root@localhost ~]# ssh-keygen Generating public/private rsa key pair. Enter file in which to save the key (/root/.ssh/id_rsa): Created directory ‘/root/.ssh‘. Enter passphrase (empty for no passphrase): Enter same passphrase again: Your identification has been saved in /root/.ssh/id_rsa. Your public key has been saved in /root/.ssh/id_rsa.pub. The key fingerprint is: SHA256:lIvGygyJHycNTZJ0KeuE/BM0BWGGq/UTgMUQNo7Qm2M root@node1 The key‘s randomart image is: +---[RSA 2048]----+ |+@=**o | |*.XB. . | |oo+*o o | |.+E=.. o . | |o=*o+.+ S | |...Xoo | | . =. | | | | | +----[SHA256]-----+ [root@localhost ~]# for i in `seq 1 8`;do ssh-copy-id root@node$i;done

3.同步所有服务器时间

[root@node1 ~]# ansible all -m shell -o -a ‘ntpdate ntp1.aliyun.com‘ node4 | CHANGED | rc=0 | (stdout) 20 Feb 16:08:37 ntpdate[2477]: adjust time server 120.25.115.20 offset 0.001546 sec node6 | CHANGED | rc=0 | (stdout) 20 Feb 16:08:37 ntpdate[2470]: adjust time server 120.25.115.20 offset 0.000220 sec node2 | CHANGED | rc=0 | (stdout) 20 Feb 16:08:37 ntpdate[2406]: adjust time server 120.25.115.20 offset -0.002414 sec node3 | CHANGED | rc=0 | (stdout) 20 Feb 16:08:37 ntpdate[2465]: adjust time server 120.25.115.20 offset -0.001185 sec node5 | CHANGED | rc=0 | (stdout) 20 Feb 16:08:37 ntpdate[2466]: adjust time server 120.25.115.20 offset 0.005768 sec node7 | CHANGED | rc=0 | (stdout) 20 Feb 16:08:43 ntpdate[2503]: adjust time server 120.25.115.20 offset 0.000703 sec node8 | CHANGED | rc=0 | (stdout) 20 Feb 16:08:43 ntpdate[2426]: adjust time server 120.25.115.20 offset -0.001338 sec

4.所有服务器安装JDK环境并配置好环境变量

[root@node1 ~]# tar -xf jdk-8u144-linux-x64.gz -C /usr/ [root@node1 ~]# ln -sv /usr/jdk1.8.0_144/ /usr/java "/usr/java" -> "/usr/jdk1.8.0_144/" [root@node1 ~]# cat /etc/profile.d/java.sh export JAVA_HOME=/usr/java export PATH=$PATH:$JAVA_HOME/bin [root@node1 ~]# source /etc/profile.d/java.sh [root@node1 ~]# java -version java version "1.8.0_144" Java(TM) SE Runtime Environment (build 1.8.0_144-b01) Java HotSpot(TM) 64-Bit Server VM (build 25.144-b01, mixed mode)

zookeeper集群搭建

在规划好的6、7、8节点上安装zookeeper(JDK环境要准备好)

#解压zookeeper程序到/usr目录下 [root@node6 ~]# tar xf zookeeper-3.4.6.tar.gz -C /usr/ #创建zookeeper存放数据目录 [root@node6 ~]# mkdir /usr/data/zookeeper #将zookeeper的conf目录下sample配置文件更改成cfg文件 [root@node6 ~]# cp /usr/zookeeper-3.4.6/conf/zoo_sample.cfg /usr/zookeeper-3.4.6/conf/zoo.cfg #编辑配置文件,更改数据存放目录,并添加zookeeper集群配置信息 [root@node6 ~]# vim /usr/zookeeper-3.4.6/conf/zoo.cfg dataDir=/usr/data/zookeeper #修改 server.1=node6:2888:3888 #添加 server.2=node7:2888:3888 #添加 server.3=node8:2888:3888 #添加 #把配置好的zookeeper程序文件分发至其余的两个节点 [root@node6 ~]# scp -r /usr/zookeeper-3.4.6/ node7:/usr/zookeeper-3.4.6/ [root@node6 ~]# scp -r /usr/zookeeper-3.4.6/ node8:/usr/zookeeper-3.4.6/ #在刚刚创建的目录下当前zookeeper节点信息,必须为数字,且三个节点不能相同 [root@node6 ~]# echo 1 > /usr/data/zookeeper/myid #在剩下的两个节点上也要创建数据存放目录和节点配置文件 [root@node7 ~]# mkdir /usr/data/zookeeper [root@node7 ~]# echo 2 > /usr/data/zookeeper/myid [root@node8 ~]# mkdir /usr/data/zookeeper [root@node8 ~]# echo 3 > /usr/data/zookeeper/myid #配置完成后启动zookeeper集群 [root@node6 ~]# /usr/zookeeper-3.4.6/bin/zkServer.sh start [root@node7 ~]# /usr/zookeeper-3.4.6/bin/zkServer.sh start [root@node8 ~]# /usr/zookeeper-3.4.6/bin/zkServer.sh start #查看集群启动情况(先启动的会成为leader,同时启动数字大的会成为leader) [root@node6 ~]# /usr/zookeeper-3.4.6/bin/zkServer.sh status JMX enabled by default Using config: /usr/zookeeper-3.4.6/bin/../conf/zoo.cfg Mode: follower [root@node7 ~]# /usr/zookeeper-3.4.6/bin/zkServer.sh status JMX enabled by default Using config: /usr/zookeeper-3.4.6/bin/../conf/zoo.cfg Mode: follower [root@node8 ~]# /usr/zookeeper-3.4.6/bin/zkServer.sh status JMX enabled by default Using config: /usr/zookeeper-3.4.6/bin/../conf/zoo.cfg Mode: leader [root@node8 ~]# netstat -tnlp | grep java #只有主节点有2888 tcp6 0 0 :::2181 :::* LISTEN 33766/java tcp6 0 0 192.168.159.139:2888 :::* LISTEN 33766/java tcp6 0 0 192.168.159.139:3888 :::* LISTEN 33766/java tcp6 0 0 :::43793 :::* LISTEN 33766/java

Hadoop集群搭建

1.先添加hadoop的环境变量

[root@node1 ~]# cat /etc/profile.d/hadoop.sh export HADOOP_HOME=/usr/hadoop-2.9.2 export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

2.解压hadoop程序包到/usr目录下

[root@node1 ~]# tar xf hadoop-2.9.2.tar.gz -C /usr [root@node1 ~]# ln -sv /usr/hadoop-2.9.2/ /usr/hadoop "/usr/hadoop" -> "/usr/hadoop-2.9.2/"

3.更改hadoop程序包内 hadoop-env.sh,mapred-env.sh,yarn-env.sh中的JAVA_HOME环境变量

[root@node1 ~]# grep ‘export JAVA_HOME‘ /usr/hadoop/etc/hadoop/{hadoop-env.sh,mapred-env.sh,yarn-env.sh}

/usr/hadoop/etc/hadoop/hadoop-env.sh:export JAVA_HOME=/usr/java

/usr/hadoop/etc/hadoop/mapred-env.sh:export JAVA_HOME=/usr/java

/usr/hadoop/etc/hadoop/yarn-env.sh:export JAVA_HOME=/usr/java

4.修改core-site.xml文件(NameNode配置文件)

[root@node1 ~]# vim /usr/hadoop/etc/hadoop/core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://hadoop</value>

<!--HA部署下,NameNode访问hdfs-site.xml中的dfs.nameservices值 -->

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/usr/data/hadoop</value>

<!--Hadoop的文件存放目录 -->

</property>

<property>

<name>ha.zookeeper.quorum</name>

<value>node6:2181,node7:2181,node8:2181</value>

<!--zookeeper集群地址 -->

</property>

</configuration>

5.在所有hadoop节点创建/usr/data/hadoop目录

6.修改hdfs-site.xml文件

原文:https://www.cnblogs.com/forlive/p/12345508.html